コンセプトワークのパイプラインの設計の難しさ

The difficulty of designing a pipeline for concept work

(株式会社ポリゴン・ピクチュアズ / スタジオフォンズ)

(Polygon Pictures Inc. / Studio Phones)

本資料は会合後にディスカッションされた内容を踏まえ記載しています。今後の会合の状況を見つつ加筆修正を含めブラッシュアップを進めていく予定です。

This document was written based on discussions held after the seminar. We plan to keep refining it, adding content and making further revisions in light of future seminars.

Translated by the PPI Translation Team

■概要

■Overview

一般的にプリプロダクションと呼ばれる、作品の企画、ストーリー開発、コンセプトアート、各種設定、キャラクターデザイン、カラースクリプトといった設計をおこなう工程がプロダクションを開始する前に行われます。

プロダクションではそれらの設計をもとに実際の制作を行なっていくわけですが、プリプロダクションで作成されるアセットデータやそれに付随するメタ情報も、プロダクション時と同様に管理する必要があり、複数人のアーティストによる作業となるため、それらの作業を円滑におこなえるパイプラインシステムが必要となります。

しかしながら、プリプロダクションの過程はプロダクションとは異なり、そもそも不確定な要素が多く、それらを頻度の高い試行錯誤を繰り返しながら、共同作業によって設計を確定させていく作業となります。

限られた時間のなかで、いかにそのイテレーション頻度を多く確保するかという点はアーティストにとっても重要で、それらの作業になるべく集中して取り組むためのワークフローが必要となり、結果それが作品のクオリティに影響を与えるひとつの要因となり得ます。

一般的な映像制作のワークフローはウォーターフォール型か、またはそれに近しい設計となっており、そこで培われたパイプラインシステムをプリプロダクションの工程に適用することは、ワークフローの性質が大きく異なるため困難となります。

そのため、プリプロダクションにおいては、よりアジャイル型のワークフローが求められ、それらに適したパイプラインシステムの構築が不可欠となります。

Before production begins, a stage generally called pre-production takes place. This is where the project is designed: planning, story development, concept art, various setting designs, character design, color scripts, and so on.

Production carries out the actual work on the basis of these designs, but the asset data created during pre-production, together with its accompanying metadata, needs to be managed just as carefully as in production. Because several artists work on this data, a pipeline system that lets them do their work smoothly is required.

Pre-production differs from production, however, in that many elements are undetermined at the outset, and the design is settled collaboratively through frequent, repeated trial and error. With limited time, securing as many of these iterations as possible matters a great deal to the artists. A workflow that lets them concentrate on that work as fully as possible is therefore needed, and it can ultimately be one of the factors that determines the quality of the finished work.

Typical video production workflows follow the waterfall model or something close to it. Because the two workflows differ so greatly in character, pipeline systems developed for such production workflows are difficult to apply to pre-production.

Hence, pre-production calls for a more agile workflow, and building a pipeline system suited to that workflow is essential.

■プリプロダクションでのワークフロー

■Pre-production workflow

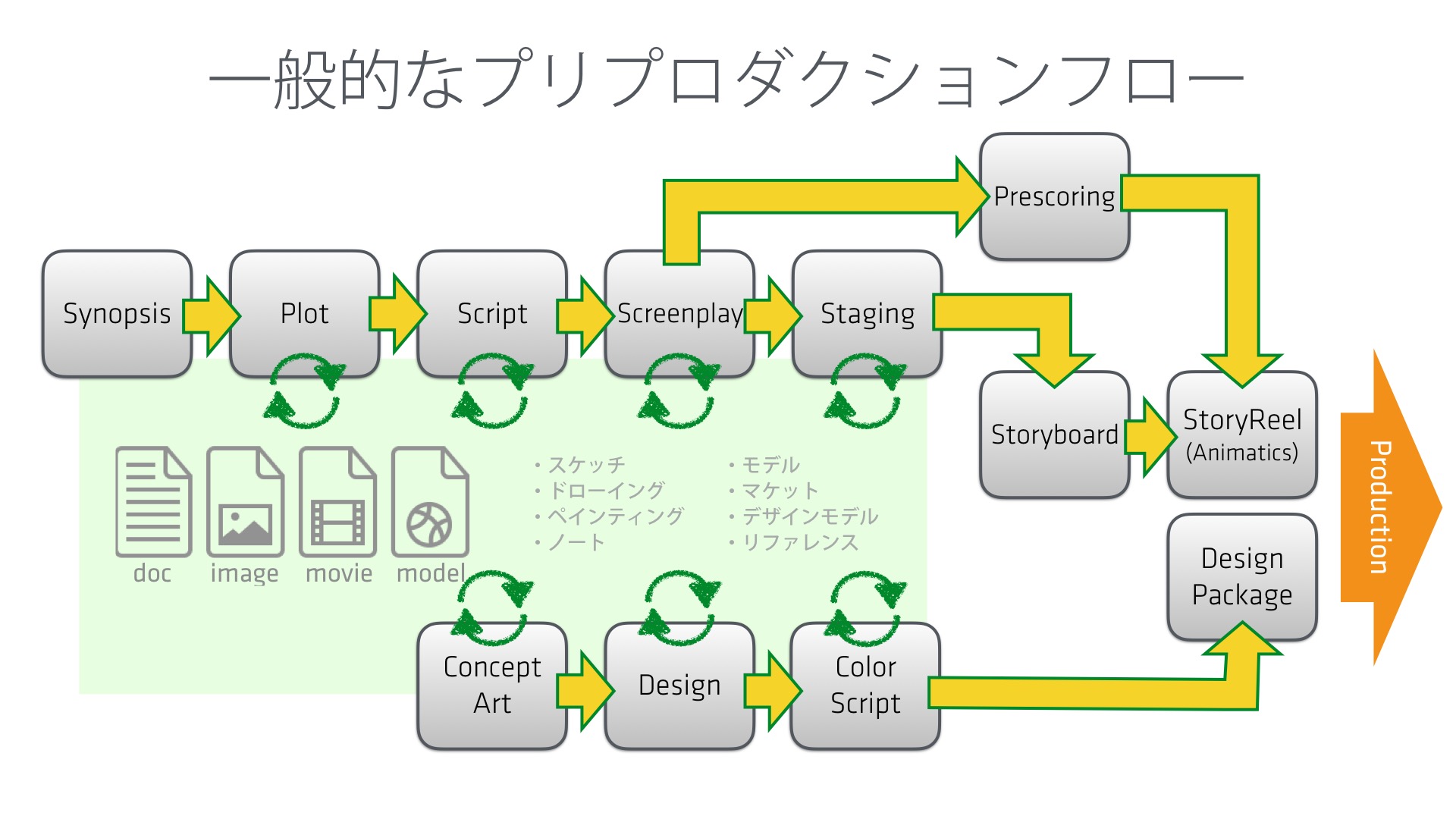

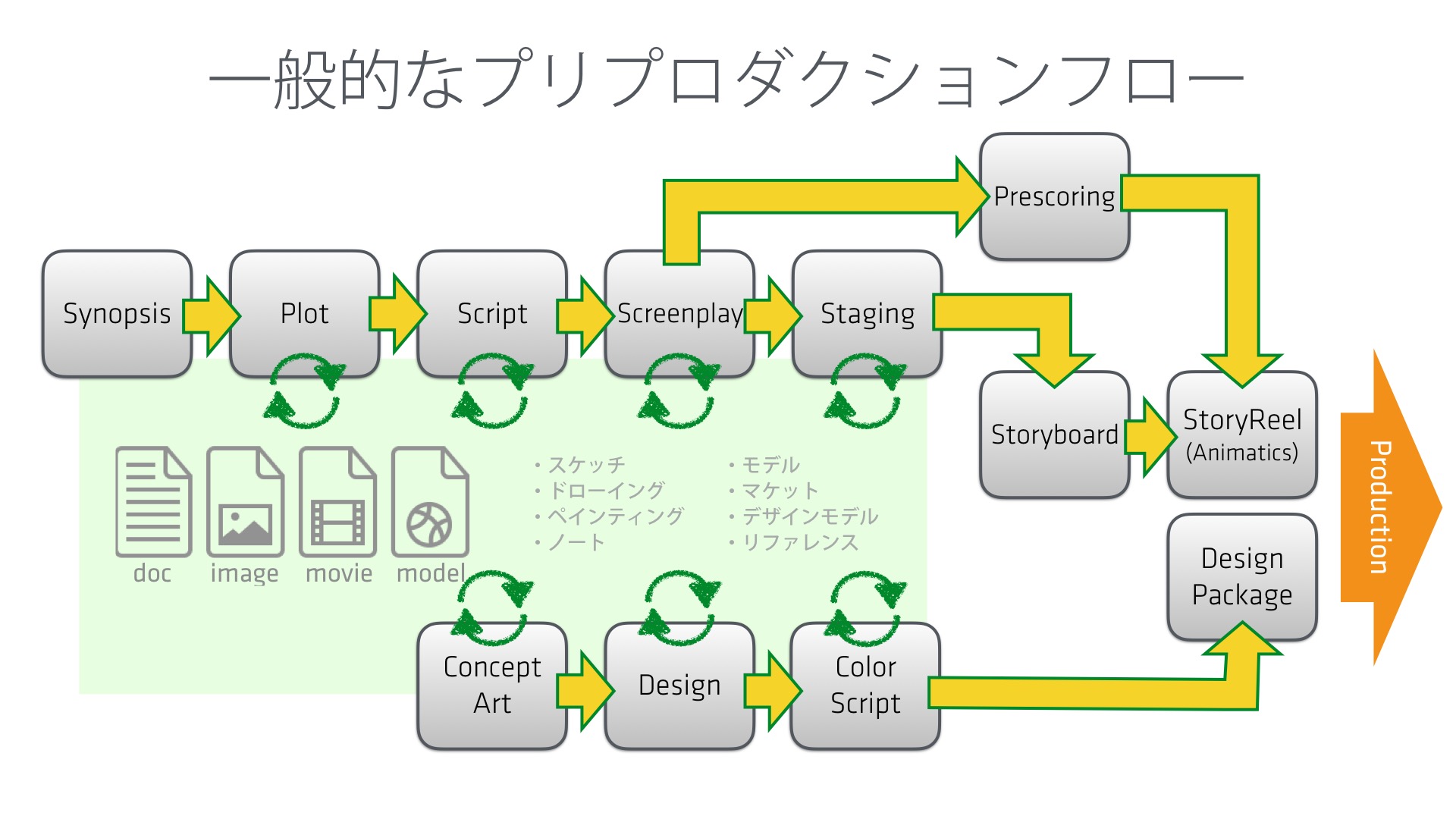

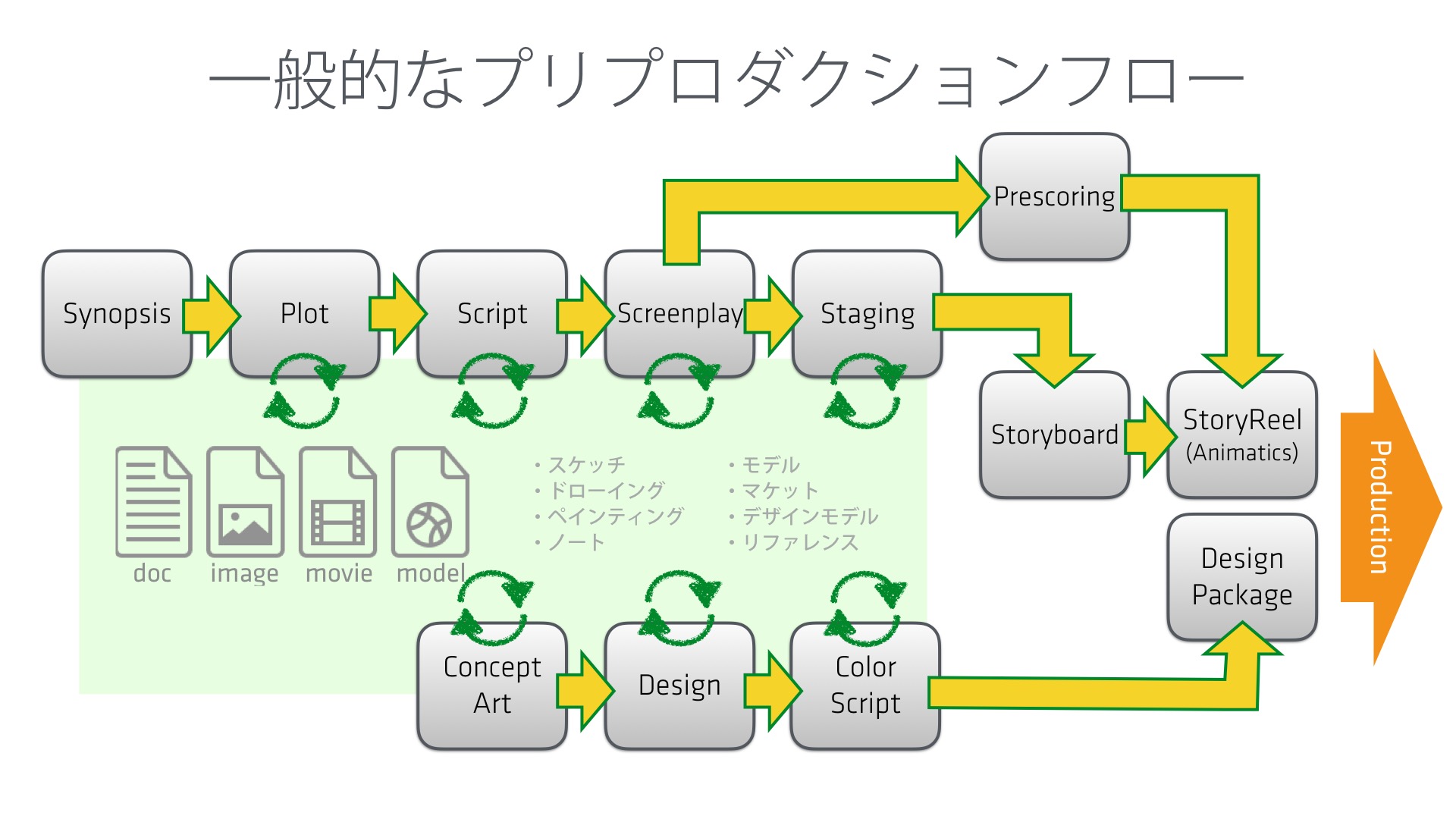

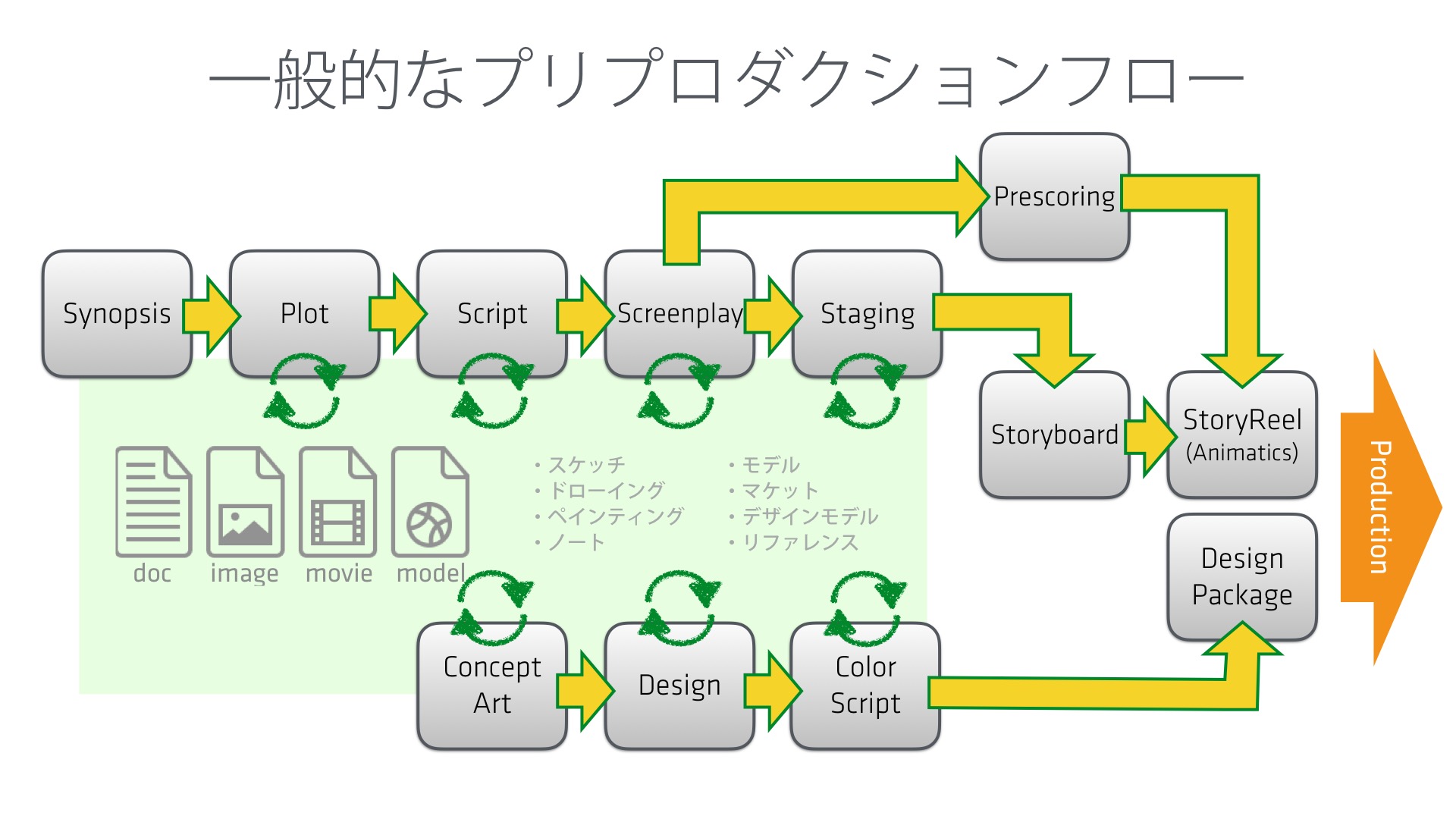

一般的なプリプロダクションのワークフローは以下のようになります。

プリプロダクションでは段階的なストーリー開発から始まり、何度も試行錯誤しながら、それらを脚本や台本へと落とし込んでいきます。またそれに並行するかたちで、コンセプトアートやデザイン、カラースクリプトなど、ストーリー開発が進むにしたがって、それらの作業も進行していきます。

作業中はイテレーションを繰り返すかたちでブラッシュアップしていき、ときにはそのアセットそのものがオミットされて、一から検討しなおすといったことも発生します。

このように、プリプロダクションではプロダクションに渡す最終的なパッケージデータになるまで、試行錯誤を繰り返すこととなり、その膨大な情報と散漫なデータを管理していくことによって、より効率的な作業を行えるようになります。これらが上手く管理できない場合、どれが最終的に採用されたデータなのか、そのデータはディレクターの承認がとれているものなのか、といったことが不明瞭になり、後工程であるプロダクションにまでその混乱の影響を与えてしまうこととなります。

またこれらのデータを管理するうえで、パイプラインや仕組みが存在しないとその管理が属人的となってしまい、ワークフロー上ボトルネックとなるケースも想定されます。

A typical pre-production workflow proceeds as follows.

Pre-production begins with story development carried out in stages. Through repeated trial and error, the story is gradually shaped into a scenario or script. In parallel, work such as concept art, designs, and color scripts moves forward as the story develops.

While this work is in progress, each piece is refined through repeated iterations. At times an entire asset is dropped and has to be reconsidered from scratch.

In this way, pre-production involves repeated trial and error until the final package of data to be delivered to production is complete. Properly managing this enormous volume of information and scattered data makes the work more efficient. When it is not managed well, it becomes unclear which data was ultimately adopted and whether that data has been approved by the director, and the confusion carries over into the following stage, production.
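To make this failure mode concrete, here is a minimal sketch of how recording a review status on every revision lets anyone answer "which version is final, and did the director approve it?" without asking the artist who made it. It assumes a hypothetical record layout; `Status`, `Revision`, and `final_revision` are illustrative names, not part of any system described in this document.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    # Hypothetical review states for a piece of pre-production artwork.
    WIP = "wip"
    SUBMITTED = "submitted"
    APPROVED = "approved"  # signed off by the director
    OMITTED = "omitted"    # asset dropped from the project


@dataclass
class Revision:
    asset: str
    number: int
    path: str
    status: Status = Status.WIP


def final_revision(revisions: list[Revision], asset: str) -> Optional[Revision]:
    """Return the newest director-approved revision of `asset`, or None.

    Returns None if the asset has no approved revision, or if its newest
    revision is OMITTED (the asset was dropped, even after an approval).
    """
    history = sorted((r for r in revisions if r.asset == asset),
                     key=lambda r: r.number)
    if not history or history[-1].status is Status.OMITTED:
        return None
    approved = [r for r in history if r.status is Status.APPROVED]
    return approved[-1] if approved else None


# Illustrative data: a newer, still-unapproved revision does not replace
# the approved one, and an omitted asset has no final revision at all.
revs = [
    Revision("hero_design", 1, "hero_v001.psd", Status.APPROVED),
    Revision("hero_design", 2, "hero_v002.psd", Status.SUBMITTED),
    Revision("castle_concept", 1, "castle_v001.png", Status.APPROVED),
    Revision("castle_concept", 2, "castle_v002.png", Status.OMITTED),
]
print(final_revision(revs, "hero_design").path)  # hero_v001.psd
print(final_revision(revs, "castle_concept"))    # None
```

The point of the design is that "final" is derived from recorded status rather than from file names or someone's memory, so the answer stays the same no matter who asks.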

Without a pipeline or system in place, the management of this data comes to depend on particular individuals, and in some cases that management work can itself become a bottleneck in the workflow.

■プリプロダクションでのパイプラインシステムの例

■Example of a pipeline system for pre-production

ここではポリゴン・ピクチュアズでインハウス開発している「Pinoco」というプリプロダクションのシステムを例に、その内容を紹介していきたいと思います。

プリプロダクションでは多くの画像、動画、音声、ドキュメント、3Dモデルなどのデータが作成されますが、Pinocoはそれらのデータを管理するためのプリプロダクション専用のパイプラインシステムとなります。データのリビジョンやメタ情報、ステータス情報を一元的に管理することができ、それらにタグ付けを行うことでデータを整理していきます。ブラウザベースで動作するシステムとなり、ドラッグ&ドロップといった操作でなるべく直感的に利用できることを意識して開発されています。

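またアジャイル的な試行錯誤のなかでデータが更新されていくワークフローを前提とした設計となっており、さまざまな軸でデータを検索出来るようになっています。

Here we introduce Pinoco, a pre-production system developed in-house at Polygon Pictures, as an example.

Pre-production produces large amounts of data, including images, video, audio, documents, and 3D models. Pinoco is a pipeline system dedicated to pre-production that manages this data. It provides centralized management of data revisions, metadata, and status information, and data is organized by tagging it. The system runs in a web browser and was developed to be as intuitive as possible, with operations such as drag-and-drop.

It is also designed around a workflow in which data is continually updated through agile trial and error, and data can be searched along a variety of axes.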
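Pinoco's internals are not described here, so the following is only a minimal sketch, assuming an in-memory store, of what "centrally managed revisions, metadata, status, and tags, searchable along several axes" can mean as a data structure. `AssetStore`, `add_revision`, `latest`, and `search` are hypothetical names; a real browser-based system would sit on a database behind a web API.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Revision:
    number: int
    path: str
    status: str = "wip"                        # e.g. "wip", "review", "approved"
    meta: dict = field(default_factory=dict)   # free-form metadata (artist, date, ...)
    tags: set = field(default_factory=set)


class AssetStore:
    """Hypothetical in-memory store keeping every revision of every asset."""

    def __init__(self) -> None:
        self._assets: dict[str, list[Revision]] = {}

    def add_revision(self, asset: str, path: str, *, status: str = "wip",
                     tags: tuple[str, ...] = (), **meta) -> Revision:
        # Revisions are appended, never overwritten, so history is preserved.
        history = self._assets.setdefault(asset, [])
        rev = Revision(len(history) + 1, path, status, dict(meta), set(tags))
        history.append(rev)
        return rev

    def latest(self, asset: str) -> Optional[Revision]:
        history = self._assets.get(asset)
        return history[-1] if history else None

    def search(self, *, tags: tuple[str, ...] = (), status: Optional[str] = None,
               **meta) -> list[str]:
        """Return names of assets whose latest revision matches every given axis."""
        hits = []
        for name, history in self._assets.items():
            rev = history[-1]
            if not set(tags) <= rev.tags:          # must carry all requested tags
                continue
            if status is not None and rev.status != status:
                continue
            if any(rev.meta.get(k) != v for k, v in meta.items()):
                continue
            hits.append(name)
        return sorted(hits)


store = AssetStore()
store.add_revision("hero", "hero_v001.psd", tags=("character",), artist="A")
store.add_revision("hero", "hero_v002.psd", status="approved",
                   tags=("character", "main"), artist="A")
store.add_revision("forest", "forest_v001.png", tags=("background",), artist="B")

print(store.search(tags=("character",)))           # ['hero']
print(store.search(status="wip"))                  # ['forest']
print(store.search(artist="A", status="approved")) # ['hero']
```

This sketch searches only each asset's latest revision, which suits the "what is current?" question that frequent iteration raises; searching the full history would be a straightforward variation.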